NN : Forward and Back Propagation MCQs & Program

NN : Forward and Back Propagation

Q1. Sigmoid and softmax functions

Which of the following statements is true for a neural network having more than one output neuron?

Choose the correct answer from below:

A. In a neural network where the output neurons have the sigmoid activation, the sum of all the outputs from the neurons is always 1.

B. In a neural network where the output neurons have the sigmoid activation, the sum of all the outputs from the neurons is 1 if and only if we have just two output neurons.

C. In a neural network where the output neurons have the softmax activation, the sum of all the outputs from the neurons is always 1.

D. The softmax function is a special case of the sigmoid function

Ans: C

- With the sigmoid activation, each output neuron is squashed independently into (0, 1), so with more than one neuron the sum of the outputs can take any value between 0 and the number of neurons.

- The softmax function outputs a probability distribution over the classes, so the sum of the outputs is always 1.

- The sigmoid function is the special case of the softmax function where the number of classes is 2.
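The three points above can be checked numerically. The following is a minimal sketch, assuming NumPy is available; the logit values and the helper names `sigmoid` and `softmax` are illustrative choices, not part of the original quiz.

```python
import numpy as np

def sigmoid(z):
    # Element-wise logistic function; each output lies in (0, 1)
    return 1.0 / (1.0 + np.exp(-z))

def softmax(z):
    # Subtracting the max does not change the result but avoids overflow
    e = np.exp(z - np.max(z))
    return e / np.sum(e)

# Hypothetical logits for three output neurons
z = np.array([2.0, -1.0, 0.5])

sigmoid_out = sigmoid(z)
softmax_out = softmax(z)

print("sum of sigmoid outputs:", sigmoid_out.sum())  # not 1 in general
print("sum of softmax outputs:", softmax_out.sum())  # 1 up to rounding

# Two-class softmax over the logits [z0, 0] reduces to sigmoid(z0)
z0 = 1.3
two_class = softmax(np.array([z0, 0.0]))
print(np.isclose(two_class[0], sigmoid(z0)))
```

Fixing the second logit to 0 is one common way to show the reduction: only the difference between the two logits matters for a two-class softmax.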

Q2. Forward propagation

Given the independent and dependent variables in X and y, complete the code to calculate the results of the forward propagation for a single layer of neurons on each observation of the dataset.

The code should print the calculated labels for each observation of the given dataset, i.e. X.

Input Format:

Two lists are taken as the inputs. The first list should be the independent variables (X) and the second list should be the dependent variable (y).

Output Format:

A NumPy array consisting of the predicted label for each observation.

Sample Input:

Sample Output:

Fill the Missing Code

import numpy as np

np.random.seed(2)

#independent variables
X = np.array(eval(input()))

#dependent variable
y = np.array(eval(input()))

m = X.shape[__] #no. of samples
n = X.shape[__] #no. of features
c = ____ #no. of classes in the data and therefore no. of neurons in the layer

#weight vector of dimension (number of features, number of neurons in the layer)
w = np.random.randn(___, ___)

#bias vector of dimension (1, number of neurons in the layer)
b = np.zeros((___, ___))

#(weighted sum + bias) of dimension (number of samples, number of classes)
z = ____

#exponential transformation of z
a = np.exp(z)

#Perform the softmax on a
a = ____

#calculate the label for each observation
y_hat = ____

print(y_hat)

Final Code:

import numpy as np

np.random.seed(2)

#independent variables
X = np.array(eval(input()))

#dependent variable
y = np.array(eval(input()))

#'m' and 'n' refer to the no. of rows and columns in the dataset respectively. 'c' refers to the number of classes in y.
m = X.shape[0] #no. of samples
n = X.shape[1] #no. of features
c = len(np.unique(y)) #no. of classes in the data and therefore no. of neurons in the layer

#Initializing weights randomly
#weight vector of dimension (number of features, number of neurons in the layer)
w = np.random.randn(n, c)

#Initializing biases as zero
#bias vector of dimension (1, number of neurons in the layer)
b = np.zeros((1, c))

#Finding the output 'z'
#(weighted sum + bias) of dimension (number of samples, number of classes)
z = np.dot(X, w) + b

#Applying the softmax activation function on the output
#exponential transformation of z
a = np.exp(z)
a = a/np.sum(a, axis = 1, keepdims = True)

#Calculating the label for each observation
y_hat = np.argmax(a, axis = 1)

print(y_hat)
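The same forward pass can also be wrapped in a function, which makes it easier to try without typing input. The sketch below is not the quiz's reference solution: the function name `forward_softmax`, the sample `X` and `y`, and the max-subtraction step (a common trick to keep `np.exp` from overflowing on large values of `z`) are all assumptions added for illustration.

```python
import numpy as np

def forward_softmax(X, w, b):
    """Forward pass of one softmax layer; returns (probabilities, labels)."""
    z = X @ w + b                              # shape (samples, classes)
    z = z - np.max(z, axis=1, keepdims=True)   # stability shift, result unchanged
    a = np.exp(z)
    a = a / np.sum(a, axis=1, keepdims=True)   # each row now sums to 1
    return a, np.argmax(a, axis=1)

# Hypothetical data: 4 observations, 2 features, 3 classes
X = np.array([[0.5, 1.2], [1.0, -0.3], [-1.5, 0.8], [2.0, 2.0]])
y = np.array([0, 1, 0, 2])

n = X.shape[1]
c = len(np.unique(y))

rng = np.random.default_rng(2)
w = rng.standard_normal((n, c))   # random weights, one column per neuron
b = np.zeros((1, c))              # zero biases

probs, y_hat = forward_softmax(X, w, b)
print(probs.sum(axis=1))
print(y_hat)
```

Because the weights are random and untrained, the printed labels are not expected to match `y`; the point is only the shapes and the row sums.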

Q3. Same layer, still different output

Why do two neurons in the same layer produce different outputs even after using the same kind of function (i.e. wᵀx + b)?

Choose the correct answer from below:

A. Because the weights are not the same for the neurons.

B. Because the input for each neuron is different.

C. Because weights of all neurons are updated using different learning rates.

D. Because only biases (b) of all neurons are different, not the weights.

Ans: A

- The weights for each neuron in a layer are different. Thus the output of each neuron (wᵀx + b) will be different.

- The input for each neuron in a layer is the same. In a fully connected network, each neuron in a particular layer gets inputs from every neuron in the previous layer.

- There may be different learning rates for each model weight depending on the type of optimizer used, but that is not the reason for the neurons to give different outputs.

- In a fully connected network, each neuron has two kinds of trainable parameters: a bias and a weight vector (one weight per input). The values of the bias and the weights for any two neurons in a layer need not be the same, since they keep changing during the model training.
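A small numerical illustration of the explanation above, as a sketch with made-up numbers: two neurons receive the same input and have the same bias, yet produce different outputs because their weight vectors differ.

```python
import numpy as np

# The same input vector is fed to both neurons (hypothetical values)
x = np.array([1.0, 2.0, 3.0])

# One row of weights per neuron; the rows differ
W = np.array([[0.2, -0.5, 0.1],
              [0.7,  0.3, -0.4]])

# Identical biases, to show that the weights alone cause the difference
b = np.array([0.0, 0.0])

z = W @ x + b   # pre-activation output of each neuron
print(z)
```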

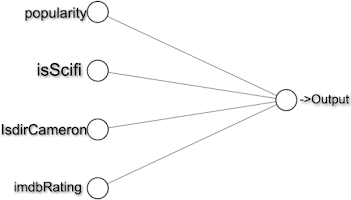

Q4. Will he watch the movie?

We want to predict whether a user would watch a movie or not. Each movie has a certain number of features, each of which is explained in the image.

Now take the case of the movie Avatar, which has the feature vector [9,1,0,5].

According to an algorithm, these features are assigned the weights [0.8,0.2,0.5,0.4] and bias = -10.

For a user X, predict whether he will watch the movie or not if the threshold value (θ) is 10.

Note: If the output of the neuron is greater than θ, the user will watch the movie; otherwise not.

Choose the correct answer from below:

A. Yes, the user will watch the movie with neuron output = -0.6

B. No, the user will not watch the movie with neuron output = -0.6

C. No, the user will watch the movie with neuron output = 2.5

D. Yes, the user will watch the movie with neuron output = 2.5

Ans: B

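Correct option: No, the user will not watch the movie, with neuron output = -0.6

Explanation:

The output of a neuron is obtained by taking the weighted sum of inputs and adding the bias term to it. Here the weighted sum is (9×0.8) + (1×0.2) + (0×0.5) + (5×0.4) = 9.4, so the output is 9.4 − 10 = −0.6. Since −0.6 is not greater than θ = 10, the user will not watch the movie.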
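The calculation can be reproduced in a few lines. This is a minimal sketch using the feature vector, weights, bias, and threshold from the question; the variable names are illustrative.

```python
import numpy as np

x = np.array([9, 1, 0, 5])            # features of the movie
w = np.array([0.8, 0.2, 0.5, 0.4])    # weights assigned to the features
bias = -10
theta = 10                            # threshold

# Neuron output: weighted sum of the inputs plus the bias
output = np.dot(w, x) + bias

# The user watches the movie only if the output exceeds the threshold
will_watch = output > theta

print(round(float(output), 2), bool(will_watch))
```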

Q5. Dance festival

The dance festival is this coming weekend and you like dancing, as everyone does. You want to decide whether to go to the festival or not. The decision depends on the following three factors (x1, x2, x3 are boolean variables):

- x1: Is the weather good? (x1=1 means good)

- x2: Will your friend accompany you? (x2=1 means she will accompany)

- x3: Is the festival near public transit? (x3=1 means 'Yes')

Output:

0: if $\sum_{i=1}^{n} w_i x_i < \text{threshold}$

1: if $\sum_{i=1}^{n} w_i x_i \ge \text{threshold}$

Which of the given options gives the correct weights for deciding with threshold = 5 using the above rule, given the condition that you won't go unless the weather is good, and will definitely go if the weather is good?

Choose the correct answer from below:

A. [6,2,2]

B. [4,2,4]

C. [3,2,1]

D. [4,2,2]

Ans: A

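Correct option: [6,2,2]

Explanation:

With weights [6,2,2], good weather alone gives a weighted sum of 6 ≥ 5, so you always go when x1=1, while x2 and x3 together give at most 2+2 = 4 < 5, so you never go when x1=0. Other options don't give these desired outputs.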
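One way to verify this is to enumerate all eight combinations of (x1, x2, x3) for each option and check that the decision always equals x1. The sketch below does that; the helper names `decide` and `satisfies_rule` are illustrative.

```python
from itertools import product

threshold = 5
options = {"A": [6, 2, 2], "B": [4, 2, 4], "C": [3, 2, 1], "D": [4, 2, 2]}

def decide(weights, x):
    # Output 1 if the weighted sum reaches the threshold, else 0
    return int(sum(w * xi for w, xi in zip(weights, x)) >= threshold)

def satisfies_rule(weights):
    # The rule "go if and only if the weather is good" means output == x1
    return all(decide(weights, x) == x[0] for x in product([0, 1], repeat=3))

valid = [name for name, w in options.items() if satisfies_rule(w)]
print(valid)  # ['A']
```

For example, option B fails because x = (0, 1, 1) gives 2 + 4 = 6 ≥ 5, and options C and D fail because good weather alone gives a sum below 5.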

Q6. Value of weight

While training a simple neural network, this is what we get with the given input:

Then what value of W will satisfy the actual output values, given that the bias equals 1?

Choose the correct answer from below:

A. 2

B. 5

C. 10

D. 7

Ans: A

Correct option: 2

Explanation:

- This problem can be solved by simple substitution. It is given that the bias equals 1, and W is the same for all inputs.

- We want to find the value of W which gives the actual outputs given in the table.

Hence, for input = 1,

- W×1+1=3, which gives W = 2

Similarly, for input = 2,

- W×2+1=5, which again gives W = 2

And finally, for input = 4,

- W×4+1=9, which again gives W = 2

Therefore 2 is the correct answer.
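The substitution can be done for all three rows at once. This sketch uses the input/output pairs quoted in the explanation (1→3, 2→5, 4→9) and solves W·x + b = y for W; the variable names are illustrative.

```python
import numpy as np

inputs = np.array([1.0, 2.0, 4.0])
outputs = np.array([3.0, 5.0, 9.0])   # actual outputs from the explanation
bias = 1.0

# Rearranging W*x + bias = y gives W = (y - bias) / x
w_candidates = (outputs - bias) / inputs
print(w_candidates)

# A single W fits all rows only if every candidate agrees
print(np.allclose(w_candidates, w_candidates[0]))
```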

Q7. Vectorize

Consider the following code snippet:

Note: All x, y and z are NumPy arrays.

Choose the correct answer from below:

A. z = x + y

B. z= x * y.T

C. z = x + y.T

D. z = x.T + y.T

Ans: C

Correct option: z = x + y.T

Explanation:

Thus the answer is z = x + y.T

Q8. Identify the Function

Mark the correct option for the below-mentioned statements:

(a) A perceptron can add up all the weighted inputs it receives and output 1 if the sum exceeds a specific value; otherwise, it outputs 0.

(b) Both artificial and biological neural networks learn from past experiences.

Choose the correct answer from below:

A. Both the mentioned statements are true.

B. Both the mentioned statements are false.

C. Only statement (a) is true.

D. Only statement (b) is true.

Ans: A

Correct option: Both the statements are true.

Explanation:

The behaviour described in statement (a) is implemented by the step activation function, so it is indeed possible.
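A perceptron with a step activation, as described in statement (a), might look like the following sketch. The function name `step_perceptron`, the weights, and the threshold are hypothetical values chosen for illustration.

```python
import numpy as np

def step_perceptron(x, w, threshold):
    # Add up the weighted inputs; output 1 only if the sum exceeds the threshold
    return 1 if np.dot(w, x) > threshold else 0

w = np.array([0.6, 0.4])   # hypothetical weights
threshold = 0.5            # hypothetical threshold

fires = step_perceptron(np.array([1, 1]), w, threshold)   # weighted sum 1.0
silent = step_perceptron(np.array([0, 1]), w, threshold)  # weighted sum 0.4
print(fires, silent)
```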

Q9. Find the Value of 'a'

Given below is a neural network with one neuron that takes two float numbers as inputs.

If the model uses the sigmoid activation function, what will be the value of 'a' for the given x1 and x2 (rounded off to 2 decimal places)?

Choose the correct answer from below:

A. 0.57

B. 0.22

C. 0.94

D. 0.75

Ans: A

Correct option: 0.57

Explanation:

The value of z will be:

- $z = w_1 x_1 + w_2 x_2 + b$

- $z = (0.5 \times 0.55) + (-0.35 \times 0.45) + 0.15 = 0.2675$

The value of a will be:

- $a = f(z) = \sigma(0.2675) = \frac{1}{1 + e^{-0.2675}} = \frac{1}{1.7652901} \approx 0.5665 \approx 0.57$
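The same computation in code, as a minimal sketch. The numbers come from the explanation; which factor of each product is the weight and which is the input is assumed from the order in which they appear in the formula for z.

```python
import math

# Assumed assignment, following the order in z = w1*x1 + w2*x2 + b
w1, x1 = 0.5, 0.55
w2, x2 = -0.35, 0.45
b = 0.15

z = w1 * x1 + w2 * x2 + b          # weighted sum plus bias
a = 1.0 / (1.0 + math.exp(-z))     # sigmoid activation

print(round(z, 4), round(a, 2))
```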
